GLOC_The Human Diver

Contributor

This article originally appeared on The Human Diver blog.

"A man with a conviction is a hard man to change. Tell him you disagree and he turns away. Show him facts or figures and he questions your sources. Appeal to logic and he fails to see your point." - Leon Festinger

Cognitive dissonance has been defined as the psychological pain of accepting facts that run counter to our views, a pain which then prevents an open and rational cycle of improvement.

Recently I re-read Black Box Thinking by Matthew Syed (a book I’d thoroughly recommend). The book uses aviation safety as the premise for improving patient safety by looking at the ways in which data has improved the former. The data from aircraft black boxes and cockpit voice recorders showed investigators what the pilots saw and experienced, and how their actions could have made sense to them at the time, despite what hindsight bias and outcome bias would have us believe. Furthermore, data from the aircraft systems allowed reconstructions to take place in the simulator so that lessons could really be learned to prevent future occurrences. Cactus 1549 (Sully) is a classic example of this.

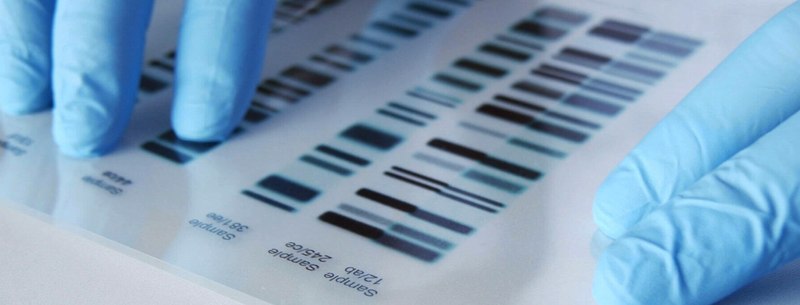

Within the book, Syed uses several examples of cognitive dissonance to show that even when evidence is presented, sometimes it cannot be believed. The accounts range from cult followers who expected a UFO to arrive and, when it didn’t, still believed their leader was correct, to numerous rape cases where DNA evidence showed that the accused couldn’t have committed the crime and yet they were in jail because of ‘confessions’. In one such case, an appeal ended with the innocent man spending another 10 years in jail because the judges and barristers who had put him away refused to accept he was innocent, despite the contrary DNA evidence. This article gives a number of similar cases of 'unindicted co-ejaculators', cases which will leave you shaking your head.

Syed points out that cognitive dissonance is more likely to affect those who feel that the decisions they make define them, because admitting they were wrong may have a negative impact on their reputation. He then goes on to say that those most susceptible are likely to be extremely bright, highly educated people in positions of power. This Wiki page gives many more examples.

Given the variety of viewpoints (many of which are amplified on social media), there are numerous examples of cognitive dissonance in diving.

1. Nitrox is/was the devil’s gas or voodoo gas. (It is safe to breathe, but be aware of oxygen toxicity risks.)

2. It is not possible to teach flat trim and neutral buoyancy at open water level as it is too difficult for the student to learn. (It is possible.)

3. It is not possible to teach student divers at open water level to primary donate as it is too difficult and causes confusion. (It is possible.)

4. Effective gas planning and gas management are too complicated for open water level. (They are not.)

5. ‘My’ agency is better than ‘your’ agency, based on the experience of a single instructor. (Students cannot effectively determine the quality of an agency; their contact with the agency is normally very limited.)

6. ‘My’ rebreather (or any other configuration) is better than ‘your’ rebreather (or configuration), without understanding the system in which it operates. (There are so many biases and use cases that it is not possible to make a black-and-white case.)

7. Cave diving is highly dangerous. (It can be, but if you are cave trained, the risks are relative.)

8. When accidents happen, it is because the diver chose to make a stupid mistake. (Human factors research from aviation, nuclear power, and oil and gas shows this is not true.)

9. As senior agency staff, I can’t talk about the risks I took and the rules I broke when I was younger because it will mean I can’t be critical of today’s practices. (The past is the past; we cannot change what happened then, and we often do unwise things because we lack the power of foresight!)

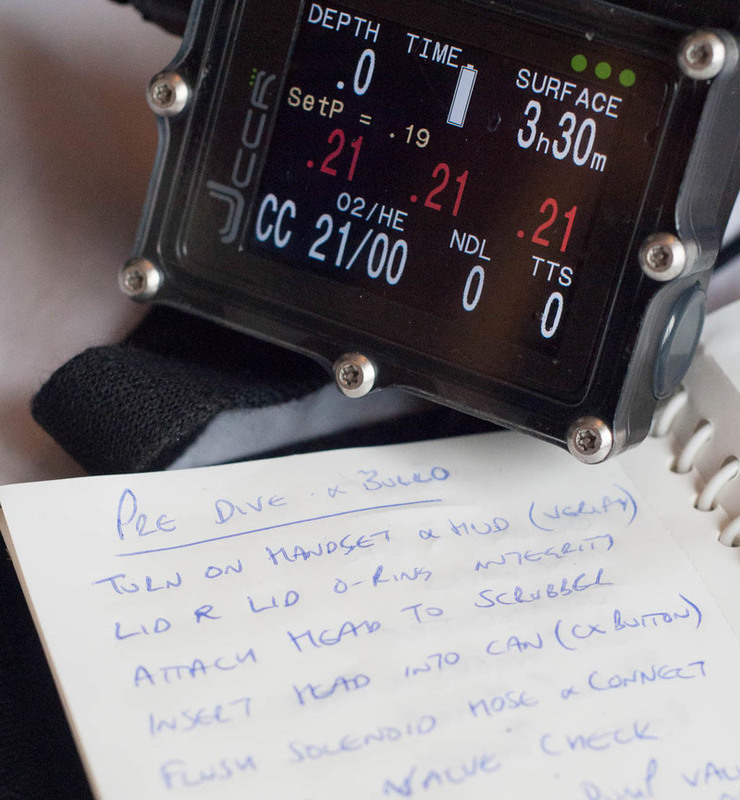

10. If you need to use a checklist to dive a CCR, you shouldn’t be diving one. (Checklists are there to reduce the likelihood of errors; they cannot eliminate them. However, poor checklists can make diving less safe.)